AI Is Both a Shock and a Stress

What a $35 Trillion Warning Tells Us About Infrastructure

AI is supposed to solve infrastructure’s problems.

It’s about to expose them.

Harvard economist Gita Gopinath is warning that $35 trillion in wealth could evaporate if systems built around AI prove more fragile than we thought. If you’ve managed critical infrastructure, the pattern feels familiar. Rapid innovation layered onto aging systems. Data trusted without validation. Governance deferred until after something breaks.

The most resilient utilities of the next decade won’t be the biggest or the most digital. They’ll be the most coherent.

The Stress AI Lands On

Utilities aren’t starting from strength.

The average water main in the U.S. is 45 years old. Wastewater treatment plants built in the 1970s still run on original equipment. Every deferred replacement, every “we’ll get to it next budget cycle” decision compounds. The backlog grows. The risk accumulates.

Half the utility workforce will be retirement-eligible within a decade. The knowledge walking out the door was never documented. How this pump behaves in winter. Why that valve sticks. Which contractor actually shows up. It lives in people’s heads.

Climate projections change faster than capital planning cycles. PFAS limits. Lead service line replacement. Microplastics monitoring. Every new regulation adds complexity and cost.

The infrastructure investment gap is measured in trillions. Political capacity to close it moves in millions.

The math doesn’t work.

Now add AI to this stack.

AI doesn’t just add to the list. It magnifies every fragility already present. Optimization models trained on historical failure rates can’t predict novel failure modes in 80-year-old pipes. Automation replaces institutional knowledge faster than training programs can rebuild it.

When financial markets face a shock, they can halt trading or inject liquidity. Infrastructure systems don’t have that luxury. When a pump fails, when a permit lapses, when a storm overwhelms capacity, there’s no pause button.

The Stormwater Test

Stormwater systems are among the most complex, least visible, and most underfunded infrastructure in the U.S. Hydrology. Hydraulics. Regulatory compliance. Asset management. Finance. All interconnected. All dependent on data that rarely exists in clean, integrated form.

Now imagine deploying an AI tool to “optimize” stormwater infrastructure.

What does that even mean?

Does it prioritize flood risk reduction? Water quality? Cost efficiency? Equity? Is it trained on local rainfall data or regional averages? Does it account for climate projections or just historical patterns? Can it recognize a pipe that’s structurally sound but hydraulically undersized?

Does it understand that a detention pond isn’t just an asset, but a regulatory compliance mechanism tied to a permit that expires in five years?

Here’s where the problem becomes visible.

The AI needs clean rainfall data. But the rain gauge network has gaps. Some gauges failed years ago and were never replaced. Historical data exists in multiple formats across different systems.

The AI needs accurate asset condition data. But inspection records are incomplete. Asset IDs are inconsistent between GIS and CMMS. Condition ratings were assigned by different inspectors using different criteria over 20 years.

The AI needs integrated regulatory context. But permit requirements have changed three times in the past decade. Compliance monitoring data lives in a separate database. And the engineer who understood how all the pieces fit together? Retired last year.

If that data is inconsistent, the AI won’t flag the gaps. It will work around them.
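To make "flag the gaps" concrete, here is a minimal sketch of a coverage check on rain gauge data. Everything in it is an assumption for illustration: the function name `rainfall_total_with_coverage`, the 90% threshold, and the sample readings are hypothetical, not drawn from any real gauge network or vendor tool.

```python
from typing import Optional, Sequence, Tuple


def rainfall_total_with_coverage(
    readings: Sequence[Optional[float]], min_coverage: float = 0.9
) -> Tuple[float, float]:
    """Sum rainfall only when enough of the expected readings are present.

    None marks a missing reading. Raises ValueError instead of quietly
    summing a record that is mostly gaps.
    """
    if not readings:
        raise ValueError("no readings expected for this period")
    present = [r for r in readings if r is not None]
    coverage = len(present) / len(readings)
    if coverage < min_coverage:
        raise ValueError(
            f"coverage {coverage:.0%} is below the required {min_coverage:.0%}"
        )
    return sum(present), coverage


# Hypothetical hourly readings (inches) from one gauge; None = gauge offline.
readings = [0.0, 2.5, None, None, None, 1.0]

# "Working around" the gaps: drop the missing hours and report a total anyway.
naive_total = sum(r for r in readings if r is not None)

# Flagging the gaps: refuse to report a total the record cannot support.
try:
    rainfall_total_with_coverage(readings)
    gap_flagged = False
except ValueError as err:
    gap_flagged = True
    print(f"naive total: {naive_total}, guarded check: {err}")
```

The naive total looks like a clean number. The guarded version forces someone to decide what to do about a gauge that was offline half the time.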

The recommendations will look authoritative. Fast. Detailed. Complete with risk scores and confidence intervals.

They’ll be wrong in ways that won’t become obvious until the next 100-year storm or the next audit.

Reality vs. Marketing

When utilities deployed AI-driven predictive maintenance, many discovered their historical data was sparse, inconsistent, or unusable.

Most needed 6-12 months just to establish baseline equipment behavior. Achieving reliable predictions typically took 1-2 years from project kickoff.

Not the “plug and play” vendors promised.

False alarms eroded trust. Maintenance crews started ignoring warnings. No system achieved the 99% accuracy promised in marketing materials. A realistic expectation: a 50-70% reduction in unplanned outages. Significant, but not the transformation leadership expected.

Contrary to marketing claims that “the AI does it all,” successful implementations required seasoned operators and maintenance engineers deeply involved. Training. Validating. Guiding the AI system.

AI augments human judgment. It doesn’t replace the need for skilled personnel who understand why equipment might be failing.

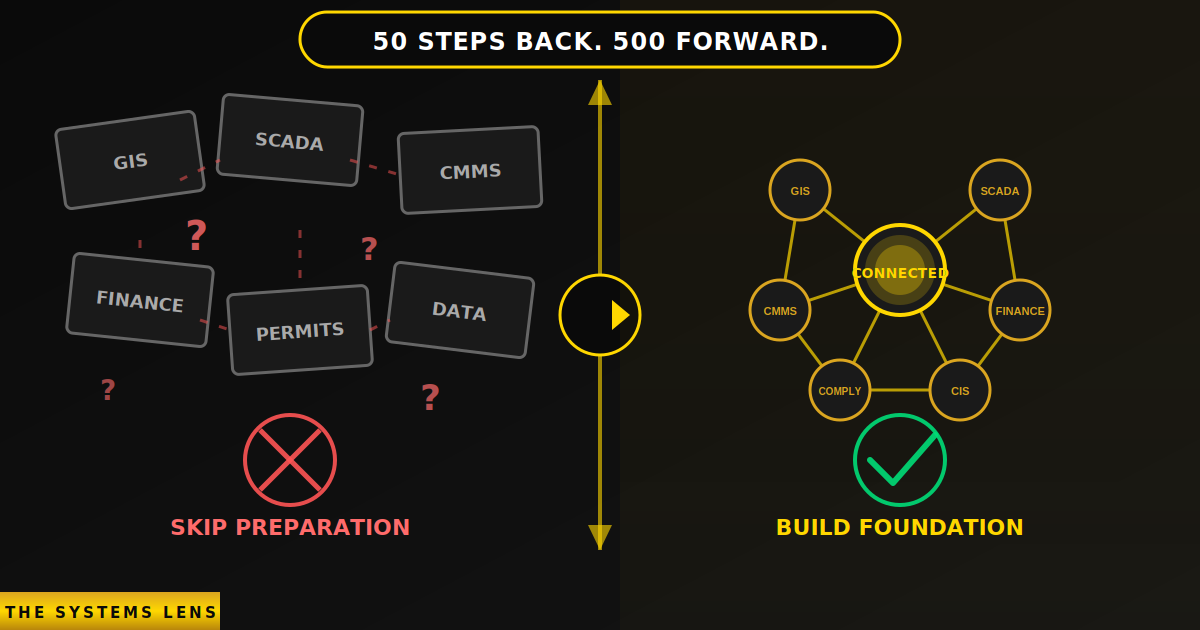

The 50/500 Principle

Years ago, I sat in a boardroom in Miami-Dade. We were managing $9 billion in capital programs under a consent decree. The pressure was relentless. Build. Deliver. Comply.

And I said something that didn’t land well.

“We should take 50 steps back before we go 500 forward. What should we be doing now so we don’t end up in our fourth or fifth consent decree five years from now?”

That question didn’t fit the agenda. The agenda was compliance. Not transformation.

The external pressure today is the same. Move fast. Regulators expect AI-enabled compliance. Ratepayers expect efficiency. Vendors promise transformation. The message: adopt AI now or get left behind.

The internal reality: fragmented systems, inconsistent data, stretched capacity, limited governance bandwidth.

Deploy AI into that environment without addressing the underlying fragility, and you don’t get transformation. You get amplified dysfunction at the speed of automation.

The 50/500 Principle: Take 50 steps back to go 500 steps forward.

Data Integrity First

Before you automate anything, audit your data.

Are asset IDs consistent across GIS, CMMS, and financial systems? Do inspection records match physical assets? Are failure codes standardized and meaningful? Does historical data reflect actual conditions, or just what got logged?

This work is unglamorous. Expensive. Requires cross-departmental coordination. It surfaces problems that have been ignored for years.

But without it, every AI tool you deploy inherits those problems and scales them.

This donkey work is crucial.
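A first pass at the asset ID question can be small. The sketch below compares ID lists exported from GIS and CMMS and reports what matches, what exists in only one system, and what matches only after formatting is normalized. The function names, the normalization rule, and the sample IDs are assumptions for illustration; real exports will need their own rules.

```python
from typing import Dict, Iterable, List


def normalize_id(raw: str) -> str:
    """Uppercase the ID and drop everything except letters and digits."""
    return "".join(ch for ch in raw.upper() if ch.isalnum())


def reconcile_asset_ids(
    gis_ids: Iterable[str], cmms_ids: Iterable[str]
) -> Dict[str, List]:
    """Compare two ID lists after normalization and report the differences."""
    gis = {normalize_id(i): i for i in gis_ids}
    cmms = {normalize_id(i): i for i in cmms_ids}
    matched = sorted(gis.keys() & cmms.keys())
    return {
        # Same asset in both systems (normalized form).
        "matched": matched,
        # Present in one system only: candidates for field verification.
        "gis_only": sorted(gis[k] for k in gis.keys() - cmms.keys()),
        "cmms_only": sorted(cmms[k] for k in cmms.keys() - gis.keys()),
        # Same asset, different spelling: candidates for an ID standard.
        "format_mismatch": sorted(
            (gis[k], cmms[k]) for k in matched if gis[k] != cmms[k]
        ),
    }


# Hypothetical sample exports.
gis_ids = ["PS-001", "PS-002", "MH-1040", "DP-07"]
cmms_ids = ["ps001", "PS-002", "MH-1041", "DP-07"]

report = reconcile_asset_ids(gis_ids, cmms_ids)
for category, items in report.items():
    print(category, items)
```

The script is the easy part. Deciding whether MH-1040 and MH-1041 are two manholes or one typo still takes someone who knows the system.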

Workforce Readiness

AI will change jobs. How it changes them depends on whether you prepare your workforce or just automate around them.

Preparation means identifying which roles will be augmented versus replaced. Creating pathways for people to transition into new roles: data analysts, AI system monitors, governance specialists. Giving staff latitude to experiment with AI tools in low-stakes environments. Building feedback loops so operators can flag when models are wrong.

You can’t bolt AI training onto existing workloads and expect adaptation. You need dedicated time for learning, experimentation, and skill development.

That’s hard. But the alternative is what creates the chaos Gopinath warns about.

Governance Before Deployment

AI governance answers three questions for every tool:

What decision is this making, and is that a decision we want automated?

What data is it using, and have we validated that data?

Who is accountable when it’s wrong, and how do we detect errors in real time?

Most AI deployments skip these questions because they feel like bureaucratic friction. But they’re the only thing standing between “AI-enabled optimization” and “automated liability.”
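One way to keep the three questions from being skipped is to make them a gate rather than a conversation. The sketch below is a hypothetical record, not a standard or a vendor feature: the class name, field names, and sample tool are all assumptions. A tool is "ready" only when every question has an answer on file.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class GovernanceRecord:
    """Answers to the three governance questions for one AI tool."""

    tool_name: str
    decision_automated: str = ""      # What decision is this making?
    automation_approved: bool = False  # Do we want that decision automated?
    data_sources_validated: bool = False  # Has the input data been validated?
    accountable_owner: str = ""       # Who is accountable when it is wrong?
    error_detection_method: str = ""  # How are errors detected in operation?

    def unanswered(self) -> List[str]:
        """Return the questions that still lack a complete answer."""
        gaps = []
        if not (self.decision_automated and self.automation_approved):
            gaps.append("decision")
        if not self.data_sources_validated:
            gaps.append("data")
        if not (self.accountable_owner and self.error_detection_method):
            gaps.append("accountability")
        return gaps

    def ready_to_deploy(self) -> bool:
        return not self.unanswered()


# Hypothetical tool: the decision is defined and approved, nothing else is.
record = GovernanceRecord(
    tool_name="pipe-risk-ranker",
    decision_automated="rank pipe segments for inspection",
    automation_approved=True,
)
print(record.tool_name, "ready:", record.ready_to_deploy())
print("open questions:", record.unanswered())
```

The value is not in the code. It is in the fact that "no one can answer" becomes a visible, recorded state instead of a silent one.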

What 500 Steps Forward Looks Like

When you take the 50 steps back, AI becomes something else entirely.

You predict failures accurately because your models are trained on validated data. You optimize operations intelligently because your workforce understands why the AI recommends certain actions and can override when context demands it. You demonstrate compliance confidently because every automated decision is traceable, auditable, and tied to accountable humans. You make capital planning defensible because your asset data, financial models, and risk assessments are interoperable and transparent.

That’s 500 steps forward. More resilient systems instead of just faster processes.

Skip the preparation? You get authoritative-looking recommendations that compound bad data into worse decisions. Automation becomes a liability shield. A way to deflect accountability without improving outcomes.

Regulators don’t accept “the algorithm said so” as justification for permit violations.

Regional Governance or Regional Fragility

You can’t solve AI governance in isolation.

If AI displaces 15% of technical staff across a metropolitan region, individual utilities can’t absorb that shock alone. Where do those workers go? Who retrains them?

Every utility in a watershed affects the others. Upstream discharge affects downstream water quality. Shared aquifers don’t respect jurisdictional boundaries.

If every utility’s data is siloed (incompatible formats, inconsistent taxonomies, proprietary platforms), regional planning becomes impossible.

If 10 utilities in a region all adopt the same AI platform, trained on similar datasets, using the same vendor’s model architecture, you haven’t diversified risk. You’ve synchronized it. One model error propagates across all 10 systems.

That’s not governance. That’s chaos with documentation.
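The difference between diversified and synchronized risk can be put in rough numbers. The sketch below is deliberately idealized: the 5% error probability is a made-up figure, and the "independent" case assumes models that share nothing, which real models trained on similar data never fully achieve. It shows the two ends of the range, not a forecast.

```python
def prob_all_fail_independent(p: float, n: int) -> float:
    """Chance that n fully independent models all err at once."""
    return p ** n


def prob_all_fail_shared(p: float, n: int) -> float:
    """Chance that n utilities on one shared model all err at once.

    A single model error reaches every utility, so n does not matter.
    """
    return p


# Hypothetical: a 5% chance that a model badly misjudges a given event.
p, n = 0.05, 10

independent = prob_all_fail_independent(p, n)
shared = prob_all_fail_shared(p, n)

print(f"all {n} fail together, independent models: {independent:.2e}")
print(f"all {n} fail together, one shared model:   {shared:.2e}")
```

Real regions sit somewhere between those two numbers. Procurement decisions determine which end they sit closer to.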

The alternative: treat data infrastructure as a regional public good. Shared data standards. Regional data trusts. Coordinated procurement with interoperability requirements built into vendor contracts.

Someone has to take up the mantle.

The Hard Question

When your next capital plan assumes AI will optimize asset management, streamline compliance, or improve forecasting, who in the room is asking what happens when the model is wrong?

Who’s asking whether the workforce is ready? Whether the data is validated? Whether accountability is defined?

If no one is asking, you’re inheriting risk.

And when that risk materializes, when the pump fails, the permit lapses, the forecast misses, the rate case stumbles, you won’t be able to blame the algorithm.

The algorithm was never the problem.

The system was.

Unlike financial markets, infrastructure systems don’t get bailouts. They just fail. Slowly. Incrementally. Irreversibly.

Until someone takes 50 steps back to fix the foundations.

The utilities that do that work now will be the ones still operating a decade from now.

Three Things That Matter

1. Data integrity before AI deployment. You can’t automate your way out of data debt. If your GIS doesn’t match your CMMS, if your inspection records are incomplete, AI will codify those problems at scale.

2. Workforce readiness is not optional. AI requires more skilled capacity, not less. People to interpret, validate, and correct models. “Automate first, deal with workforce disruption later” is what creates the chaos.

3. Governance means accountability. For every AI tool, answer this: Who is responsible when it’s wrong? If no one can answer, you’re not ready to deploy.

AI is both a shock and a stress.

A shock because it demands immediate adaptation. Deploy now or fall behind. It disrupts workflows, displaces workers, forces decisions at speed.

A stress because it requires sustained investment in exactly the capacities utilities have been deferring for decades. Data infrastructure. Workforce development. Governance frameworks. Regional coordination.

But this is also an opportunity.

Using AI to fix the data debt inside our systems, not just to automate it, lets us finally build infrastructure that is intelligent, accountable, and resilient.

The outcome will depend not on the intelligence of our models, but on the integrity of our systems.

Are you inheriting risk or building resilience?